The conferences. Canada, Winter 2018.

I took a train to Toronto to join a small self-organizing conference. Outside the windows, the landscape changed dramatically from white to red. It was just a few hours away but felt like moving to a completely different world. On the first day, I took a short walk around the University of Toronto and visited a dining hall that was the model for Harry Potter’s Great Hall. Hooray!

The conference was started by a former PhD student who was the first author of the Generative Adversarial Network paper. I had always thought he was still in the US two years later, and then suddenly he showed up in Canada along with the conference. A self-organizing conference means that the agenda is open. Participants were encouraged to form their own circles. If they could not find one, the kitchen was always welcoming. This was also how the cat neuron was born, which started a whole project at Google in 2012.

After a few years, Generative Adversarial Networks became a well-known idea, cited in hundreds of articles. Despite some technical problems, the results were impressive. They showed a different kind of art, with a sense of super-realism that amazed the top critics. People started to question creativity, art and what made humans special.

However, somebody else in the room thought in a completely different way. He was the most relentless researcher in this area, with more than half a decade of work on psychology and computer science. Unlike the rest of the crowd, he had followed his own intuition when the world completely abandoned the idea of AI. At the conference, he still talked about why deep learning could not be as good as human vision. His favorite toy example was the 3D rotation of an object, which illustrates how good humans are at learning invariant representations.

I just felt super lucky. A long time ago I had lost interest in computer science and spent most of my time trying to dig deep into psychology and neuroscience. By accident, I jumped into an unplanned field of research, read a paper, and dropped out. As a result, I had to retrain myself as something more like a data engineer working on fancy tech. In my four years as an undergraduate, I had always avoided coding as much as possible. It is always an unpleasant feeling to try something that you do not enjoy very much. There is a part of your brain saying “Pain! Pain! Pain!” - and yes, it was a real physical pain, an experience much less joyful than solving a math puzzle. It was similar to traveling - a bunch of paperwork that I had to get done. But pushing through was the only option, and that was how I got there.

The gift I had was imagination. It used to work pretty well when I tried to solve a difficult math puzzle. A bunch of random things would jump up and down in my head and urge me to write them down. Sometimes it was like a fast train of connected ideas in no sensible order, but then, looking back, it turned out to be something completely different. Sometimes it was a flash of interesting things that I had never seen, or a short video of traveling through time and space. Sometimes it was a prediction of something that would happen in the future. Those cannot be memorized - they are generated by my life experience. These are things that I do not think a machine would be able to capture. We have only scratched the surface of what true human capacity can do. Think of speed reading, language learning, and reading silently versus reading out loud. It is still so hard to make you understand all of these through your own experience. Think of how babies learn to talk without any supervision.

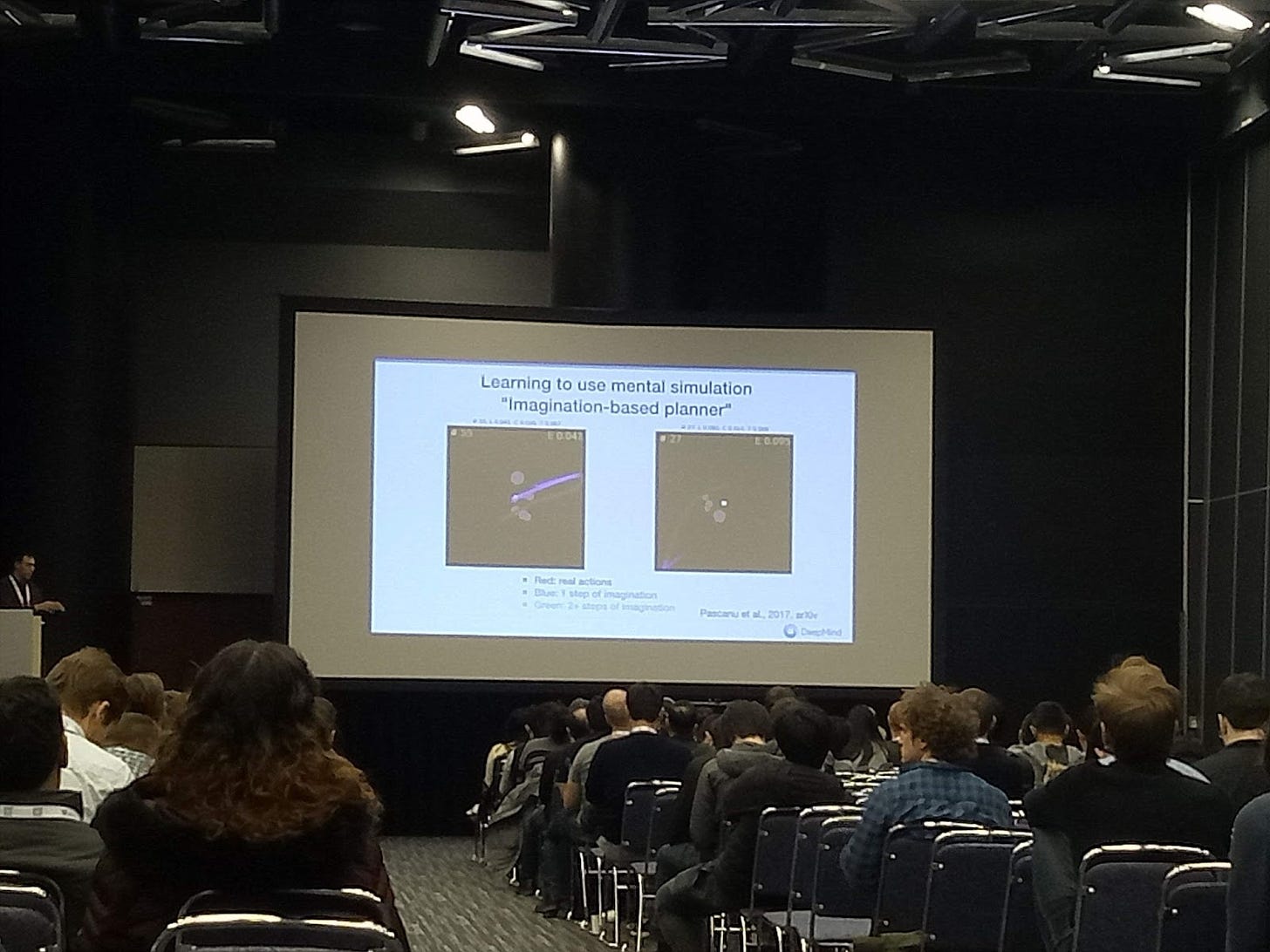

After the conference in Toronto, I went straight back to Montreal to attend another conference - the biggest one in academic research. That year the industry decided to jump in. They held the first open expo right before the main event. It was like a rock concert. Tickets sold out in ten minutes. I could not buy a ticket, but thankfully I could get into some of the workshops and meet some interesting people.

Machine learning has always looked like a joke to me. The high-level idea is that you give a machine a task and instruct it on how to get it done. At that time, there were three main branches: supervised learning, unsupervised learning and reinforcement learning. Supervised learning is about explicit instruction with a sample of data. Reinforcement learning is about learning in an environment that includes a policy and a set of actions. Unsupervised learning is more like a placeholder name for an unclassified group of algorithms.

I attribute my first step to my undergraduate supervisor's deep frustration with the clustering method. Somehow it did not make sense to him, and he decided to jump into mathematics. I was personally fascinated by neuroscience and tried everything I could to learn more about the field. That ended up as an internship in France; however, due to family issues, I chose Singapore as the place to do research, and there I came across the Generative Adversarial Network paper. Since then, I bounced around the research world to help refine that idea and eventually ended up in Montreal. All I did was guess the next step that made sense to me. Sitting in the conference as a nobody, I often felt like people were talking about me - not my research but my story. All I did was follow an inner call.

A few years earlier, before my departure from San Francisco, I built a web app called Doko. The name comes from the Japanese “Doko e ikimasu ka?” Where would you go? I left a part of my psychometric knowledge and the direction of my research there, wrapped up in engaging language that everybody could understand. It was about self-learning algorithms - another bet.

It’s time to go back to the city.